Summary

AI code review has moved through three stages: explaining issues, suggesting fixes, and applying them. The fourth stage, autonomous remediation without developer initiation, is now in early production deployment.

The 2025 DORA State of AI-Assisted Software Development report found that AI amplifies existing organizational dynamics. In teams with strong CI/CD practices, it accelerates delivery. In teams with brittle processes, it exposes bottlenecks more acutely. Tooling without process change does not improve outcomes.

The tools that earn lasting adoption are not the ones that find the most issues. They are the ones that are right when they flag something, and resolve it before routing it back to the developer.

Engineering leaders making tooling decisions today are effectively choosing which review architecture they will be running in 2027. The category is moving fast enough that the right choice now is different from the right choice eighteen months ago.

What is AI code review?

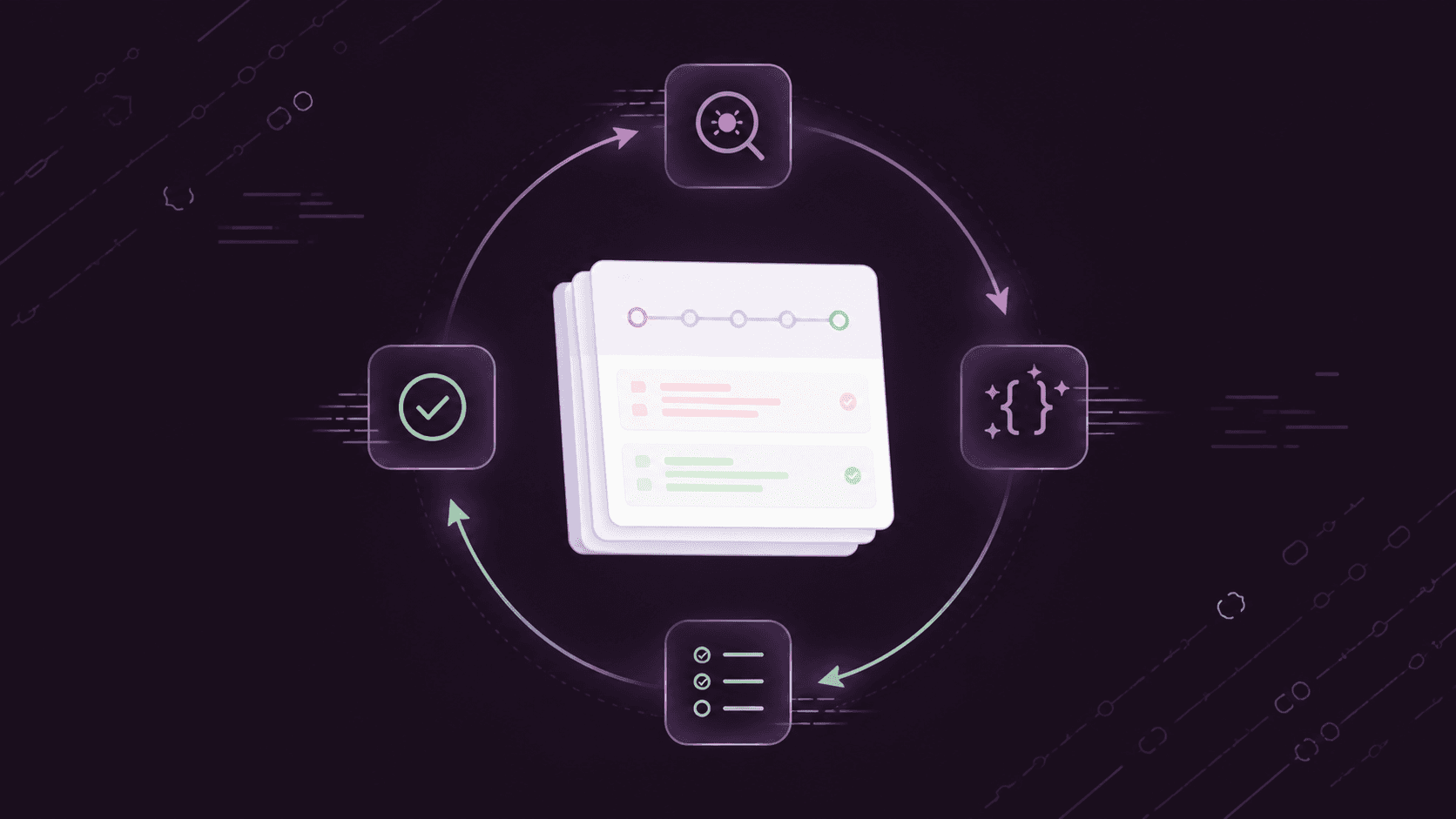

AI code review is the use of artificial intelligence to automatically analyze code changes for bugs, security vulnerabilities, quality issues, and standards violations as part of the pull request workflow. Early implementations explained lint output in natural language. Current tools generate and apply fix suggestions directly on the PR. The category is moving toward autonomous remediation, where the system detects an issue, applies a validated fix, and confirms it against the CI pipeline without a developer initiating any step in that sequence.

The future of AI in code review is not a single capability. It is a direction, and the direction is legible from how the category has already moved.

Three eras, and the one we are entering

The first era of AI code review was explanation. Tools turned cryptic static analysis output into natural language descriptions of what was wrong. The improvement over a raw lint report was real, but the developer still did all the work.

The second era was suggestion. Tools proposed the fix alongside the finding. The developer still had to evaluate it, decide whether to apply it, check out the branch, and push. The review cycle shortened at the analysis stage and remained unchanged at the resolution stage.

The third era, which consolidated through 2025, was one-click application. The tool generates a commit, and the developer approves it in the PR interface. The round-trip between comment and resolution shrank from a checkout, edit, and push to a single approval.

The fourth era is autonomous remediation: the system detects, fixes, validates against CI, and closes the loop without the developer initiating any step. This is not a future prediction. Several platforms already run this capability in early production deployment. The question for engineering leaders is not whether this era is coming but whether their current tooling choices position them to adopt it cleanly when it becomes the category standard.
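The detect, fix, validate, close sequence can be sketched as a small loop. This is a minimal illustration under stated assumptions, not any vendor's implementation: `Finding`, `propose_fix`, and `ci_passes` are hypothetical stand-ins for a detector's output, a fix generator, and a CI run.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Finding:
    rule: str      # hypothetical rule identifier
    file: str
    message: str


def remediate(
    finding: Finding,
    propose_fix: Callable[[Finding, int], Optional[str]],
    ci_passes: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate patch that passes CI, or None to escalate."""
    for attempt in range(max_attempts):
        patch = propose_fix(finding, attempt)
        if patch is None:
            break  # the generator has nothing left to try
        if ci_passes(patch):
            return patch  # validated: can be committed without a developer prompt
    return None  # no validated fix: hand the finding back to a human


# Stand-in callables so the sketch runs on its own.
finding = Finding("unused-import", "app.py", "os imported but unused")
candidates = ["patch-a", "patch-b"]


def propose(f: Finding, attempt: int) -> Optional[str]:
    return candidates[attempt] if attempt < len(candidates) else None


result = remediate(finding, propose, ci_passes=lambda p: p == "patch-b")
print(result)  # patch-b
```

The design point is the last line of `remediate`: when no candidate survives CI, the loop escalates instead of committing an unvalidated change.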

What limits current tools, and why it matters

Current automated code review tools handle the mechanical layer reliably: syntax errors, known vulnerability patterns, formatting violations, and common anti-patterns. What they handle poorly is the class of problems that require understanding what the code is supposed to do rather than whether it matches a known signature. Architectural judgment, business logic validation, and context-dependent security issues remain outside the reach of annotation-based tools.

The Cloud Security Alliance reports that 62% of AI-generated code solutions contain design flaws or known vulnerabilities. The code looks clean. The vulnerabilities arise from missing context that pattern-matching cannot supply. That gap will not close through more annotations. It closes through reasoning about the code's intent.

The adoption failure mode for current tools is well established: a tool that generates many findings per PR, including low-confidence, irrelevant, or stylistic observations, trains developers to dismiss everything it produces. Trust collapses faster than the tool can win it back. Precision sustains adoption; coverage does not.
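A small worked example makes the precision argument concrete. The findings and confidence scores below are invented for illustration, and the `confidence` field and the 0.8 threshold are assumptions rather than any tool's real output.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    message: str
    confidence: float  # hypothetical tool-reported score in [0, 1]
    accepted: bool     # whether the developer acted on the finding


def precision(findings):
    """Fraction of surfaced findings that developers acted on."""
    if not findings:
        return 0.0
    return sum(f.accepted for f in findings) / len(findings)


def surface(findings, threshold):
    """Keep only findings at or above the confidence threshold."""
    return [f for f in findings if f.confidence >= threshold]


# Illustrative data only: two real problems and three stylistic remarks.
history = [
    Finding("SQL built by string concatenation", 0.95, True),
    Finding("Possible None dereference", 0.85, True),
    Finding("Prefer single quotes", 0.30, False),
    Finding("Function could be shorter", 0.25, False),
    Finding("Variable name is terse", 0.20, False),
]

print(precision(history))                # 0.4
print(precision(surface(history, 0.8)))  # 1.0
```

Surfacing everything gives the developer five comments, three of which are noise. Raising the threshold drops coverage to two comments, and both are ones the developer acts on.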

Three forces reshaping the category in 2026

CI-native intelligence

A review tool that reads only the code diff cannot determine whether the change breaks a downstream dependency, introduces a flaky test, or passes CI for the wrong reason. The next generation of tools operates inside the CI/CD pipeline with access to build logs, test results, and failure history. That access is what makes autonomous remediation possible. Without it, the tool is making decisions about code it has never seen run.
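As one illustration of what failure history adds, the sketch below flags a test as flaky when it has both passed and failed on the same commit, something no diff can reveal. The `(commit, test_name, passed)` record shape and the sample data are assumptions made for the example.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    `runs` is an iterable of (commit, test_name, passed) tuples, a
    hypothetical shape for CI history.
    """
    outcomes = defaultdict(set)
    for commit, test, passed in runs:
        outcomes[(commit, test)].add(passed)
    return sorted(
        {test for (_, test), seen in outcomes.items() if seen == {True, False}}
    )


runs = [
    ("abc123", "test_login", True),
    ("abc123", "test_login", False),     # same commit, different result: flaky
    ("abc123", "test_checkout", False),
    ("abc123", "test_checkout", False),  # consistently failing: a real break
    ("def456", "test_checkout", True),   # different commit, so not flakiness
]
print(flaky_tests(runs))  # ['test_login']
```

A tool with this history can retry `test_login` and treat `test_checkout` as a genuine regression on `abc123`, a distinction that is invisible from the diff alone.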

Natural language policy enforcement

YAML-based pipeline configuration is fragile and inaccessible to most of the engineering team. Natural language policy enforcement, where teams define quality gates and code standards in plain English and an agent enforces them on every PR, moves policy ownership from the DevOps lead to the full team. Review standards become visible and editable by everyone with a stake in them.

AI as amplifier, not substitute

The 2025 DORA State of AI-Assisted Software Development report, based on surveys from nearly 5,000 technology professionals globally, found something practitioners have long suspected: AI amplifies existing organizational dynamics. High-performing teams with mature CI/CD practices see AI accelerate delivery. Teams with brittle processes see AI expose those brittle points more acutely. The implication is direct: tooling decisions compound existing organizational strengths and weaknesses. The right investment in review infrastructure is the investment that removes the constraint, not the one that accelerates the part that is already moving.

Gitar: built for where code review is going

Gitar is an AI code review and CI/CD automation platform that operates inside the CI environment, reading build logs, classifying failures, applying fixes, and iterating until the pipeline is green. Every PR gets a single living overview comment, updated as the code changes and never duplicated. Inline suggestions address bugs, security vulnerabilities, and standards violations with working corrections applied directly to the branch, validated against CI before they surface. Natural language workflow automation lets teams define policies without bespoke configuration.

FAQs

What is the future of AI in code review?

The trajectory is from annotation to autonomous remediation. Tools have moved from explaining issues to suggesting fixes to applying them. The next stage, now in early production deployment, is fully autonomous remediation: the system detects, fixes, validates, and closes the loop without developer initiation.

Will AI replace manual code reviews?

AI will handle the substantial majority of code review by default, with human reviewers handling the exceptions: architecture decisions, security-critical logic, and changes where the stakes warrant explicit sign-off. The relevant question for engineering teams is not whether to automate review, but how to configure the boundary between what runs autonomously and what gets escalated.
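One way to picture that boundary is as an explicit routing rule. The sketch below is an assumption-laden example, not a recommended policy: the path patterns, the 200-line limit, and the `route` function are all hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: paths that always require human sign-off.
ESCALATE_PATTERNS = ["auth/*", "payments/*", "infra/*", "*.sql"]
MAX_AUTONOMOUS_LINES = 200  # assumed size limit for unattended changes


def route(changed_files, lines_changed):
    """Return 'escalate' or 'autonomous' for a proposed change."""
    for path in changed_files:
        # fnmatchcase's '*' also matches '/', so 'auth/*' covers nested paths.
        if any(fnmatchcase(path, pattern) for pattern in ESCALATE_PATTERNS):
            return "escalate"  # security-critical or stateful area
    if lines_changed > MAX_AUTONOMOUS_LINES:
        return "escalate"  # too large to merge without a human read
    return "autonomous"


print(route(["docs/readme.md"], 12))                 # autonomous
print(route(["auth/tokens/jwt.py"], 3))              # escalate
print(route(["src/util.py"], 500))                   # escalate
print(route(["db/migrations/001_init.sql"], 10))     # escalate
```

Keeping the rule in one small, readable place means the team can argue about the boundary itself rather than about individual PRs.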

How does AI improve CI/CD workflows?

AI improves CI/CD workflows at two points: at the PR stage, by catching issues before they enter the pipeline, and at the failure stage, by classifying failures, identifying root causes, and applying fixes without manual investigation.

What are agentic AI systems?

Agentic AI systems execute multi-step tasks autonomously toward a defined goal. In code review, an agentic system receives a failing CI build, identifies the root cause, applies a fix, re-runs tests, and opens a corrected PR without developer intervention at each stage.

How should teams evaluate AI code review tools?

Start with the PR experience. Signal-to-noise ratio determines whether developers trust the tool or learn to ignore it: a tool that generates low-confidence findings at volume trains engineers to dismiss everything it produces. Then assess CI integration depth: tools that analyze only the diff and tools that operate inside the CI/CD pipeline with access to build logs solve different problems. For teams with growing AI-generated code volume, tools that run inside the CI/CD pipeline and apply validated fixes are the operationally relevant category.